What is the most crucial factor that has made the technology sector one of the world’s largest economies? Why are the most successful technology firms so prosperous? Many people believe that data is the key to answering these questions.

Increased data availability directly results from the internet’s meteoric rise in popularity. The value of knowledge has never diminished. Now that we have the right equipment, we can learn anything.

Gathering information requires time and effort, just like any other task. That is why autonomous data-gathering robots are gaining traction as an alternative to time-consuming manual investigations. This article’s topic is how to teach a robot to scrape a website in under two minutes without any coding experience required.

When do you scrape?

Before we delve too deeply into the subject, let’s get a basic understanding of what “scraping” is and why it’s relevant to gathering data from the web. Scraping, in its most basic definition, is the automated, unattended collection of large amounts of data.

The direction the scraper moves in is determined by how it is set up. When the process begins, there is no longer any need for a person to operate the scraper manually.

However, developing a robust scraper often necessitates substantial time and programming expertise. That doesn’t mean anything, does it? I’m going to tell you about my go-to program for quickly and easily creating scrapers that don’t require you to know how to code!

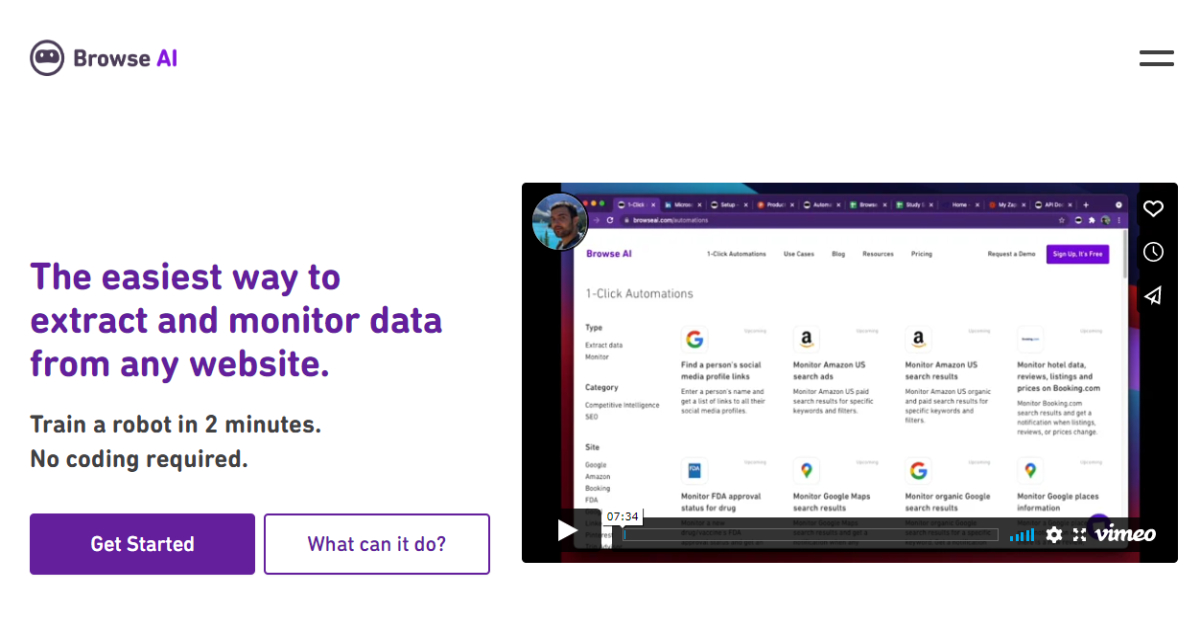

Browse AI

With just a few mouse clicks, you can create a scraping process in Browse AI, and after a short period, you can download a sheet containing all the information you need. On top of that, you can simultaneously monitor a thousand of my most-valued websites with ease using the built-in monitoring tools. Also, you get notified via email if there are any updates to the specified pages.

The flexibility of Browse AI to work with a wide variety of data processing tools is fantastic. Even Google Sheets, which is simple to integrate, could be a good starting point if you’re just getting started. We’ve established that the goal is to reduce the time spent on tasks that would otherwise be completed manually.

Browse AI’s setup process is as quick as performing the desired task for the first time. You only have to turn on the device once to demonstrate the desired procedure. After that point, you only need to collect the desired outcomes, as everything else is handled automatically.

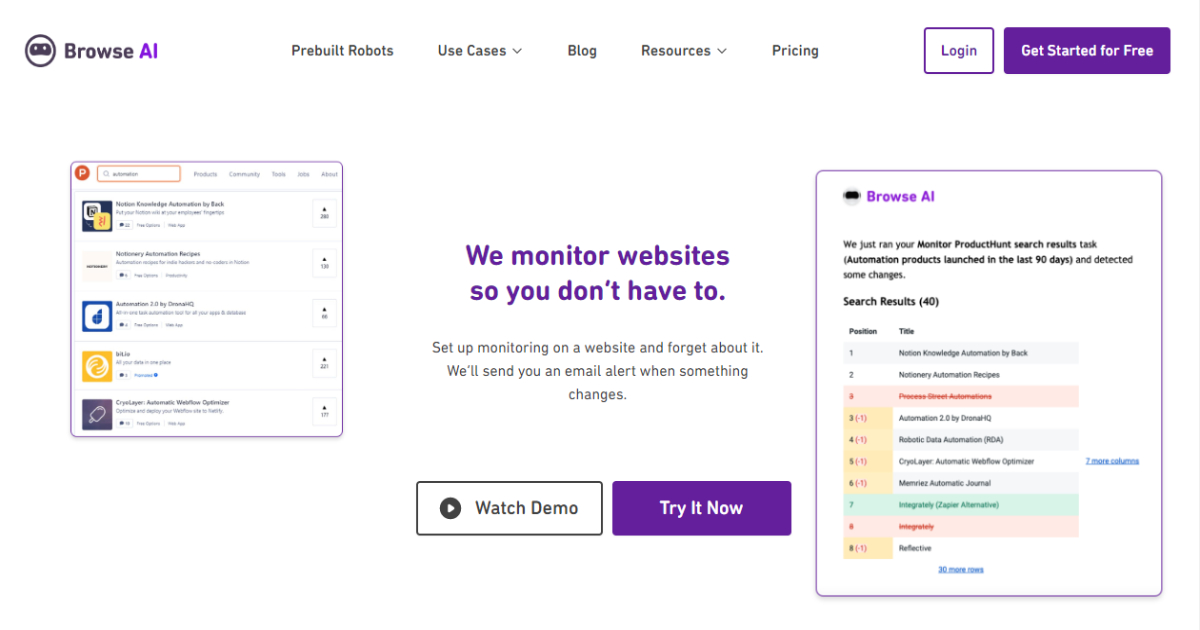

Just one instrument, so many uses

The benefits of scraping are numerous and varied, including but not limited to: price tracking, lead generation, financial analysis, market and competition research, content aggregation, and many others. Since you, the user, define what information you want and from where Browse AI can adapt to any of these quickly.

When you manage all scrapers from a single interface, there’s no longer any reason to maintain separate systems for collecting and analyzing data for different purposes. Since you only need to spend a few minutes getting acclimated to Browse AI once, you’ll be ready to go for any future applications.

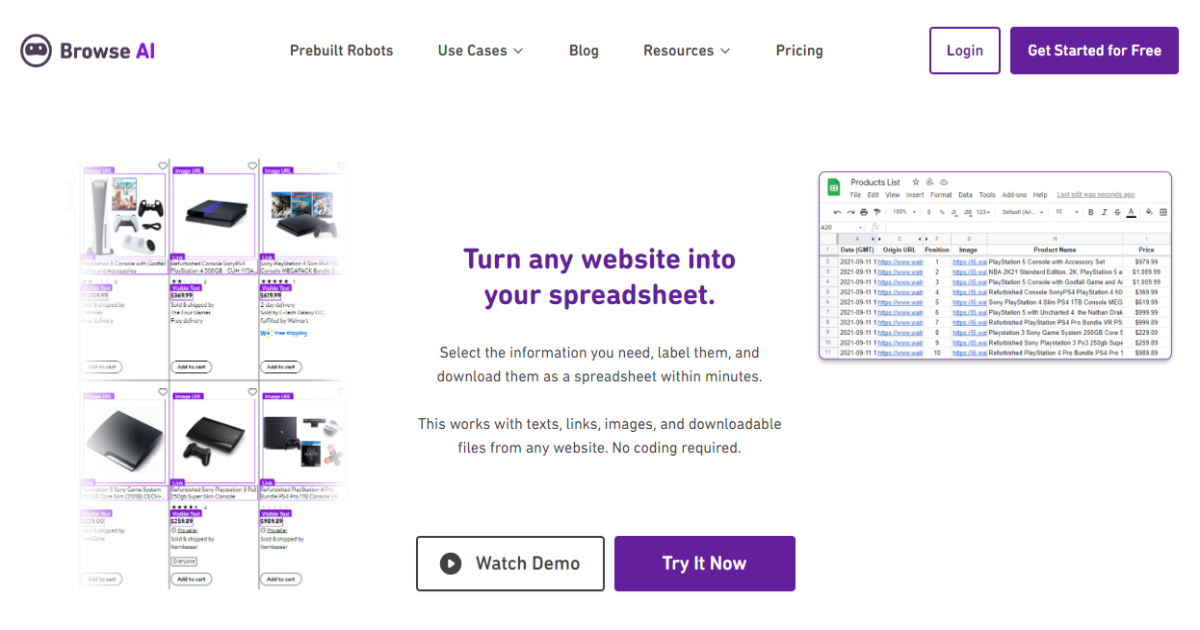

To acquire information, one can either use an API or a spreadsheet.

It’s been established that scraping can produce a wide variety of outcomes. Browse AI is not just accessible to non-technical users, as it integrates with widely-used programs like Google Spreadsheet, Zapier, and AirTable, but programmers can also use it.

You can use application programming interfaces for larger projects where you need direct communication between your website and the scraping results. This is helpful if your eCommerce site pulls information such as pricing, stock levels, and product descriptions from third-party services that don’t offer their APIs.

Combat scraping’s snags

If you’ve ever tried building a scraper, you know expanding can be challenging. Your browser, IP address, and the machine will raise suspicion on many websites. It’s not unusual to encounter captchas that are so challenging to automate that they render the entire process useless.

Thankfully, Browse AI takes care of this on its own by switching between different residential proxies, filling in captchas, and otherwise acting in a human-like manner. This will make the targeted website think that it is a real person searching for data, not a scraper, which in the end, is the goal.

Conclusion

For anyone who needs accurate and current data without doing manual work, Browse AI is a ready-made solution. It is simple to adapt for various websites because you specify what information and from which page you want. This is a significant advantage compared to scrapers designed explicitly for particular platforms and websites.

On the other hand, Browse AI does provide premade robots for well-known platforms like LinkedIn, Amazon, Google Maps, YouTube, and others if you don’t want to invest time in learning how and what. You will need to spend some time defining what you want for smaller websites, but this process only takes a few minutes, and the results are all yours – automatically!