Artificial intelligence has moved from experimental labs into real-world applications that power search engines, recommendation systems, fraud detection tools, medical diagnostics, and autonomous systems. But building a high-performing model is only half the battle. The real challenge often begins after training: deploying the model reliably, securely, and at scale. That’s where AI model deployment platforms come in—bridging the gap between data science notebooks and production-grade applications.

TLDR: AI model deployment platforms help teams move machine learning models from development to production in a reliable, scalable way. They handle infrastructure, versioning, monitoring, and scaling so developers can focus on improving model performance. Popular options include AWS SageMaker, Google Vertex AI, Azure ML, Databricks, and open source tools such as MLflow and Kubeflow. Choosing the right platform depends on your infrastructure, scalability needs, and MLOps maturity.

Why Model Deployment Is So Challenging

Deploying AI models isn’t as simple as uploading a file to a server. Real-world environments introduce complexity that research environments don’t account for:

- Scalability: Can the model handle thousands—or millions—of requests per second?

- Latency: Is inference fast enough for real-time use cases?

- Versioning: How do you track and manage multiple model versions?

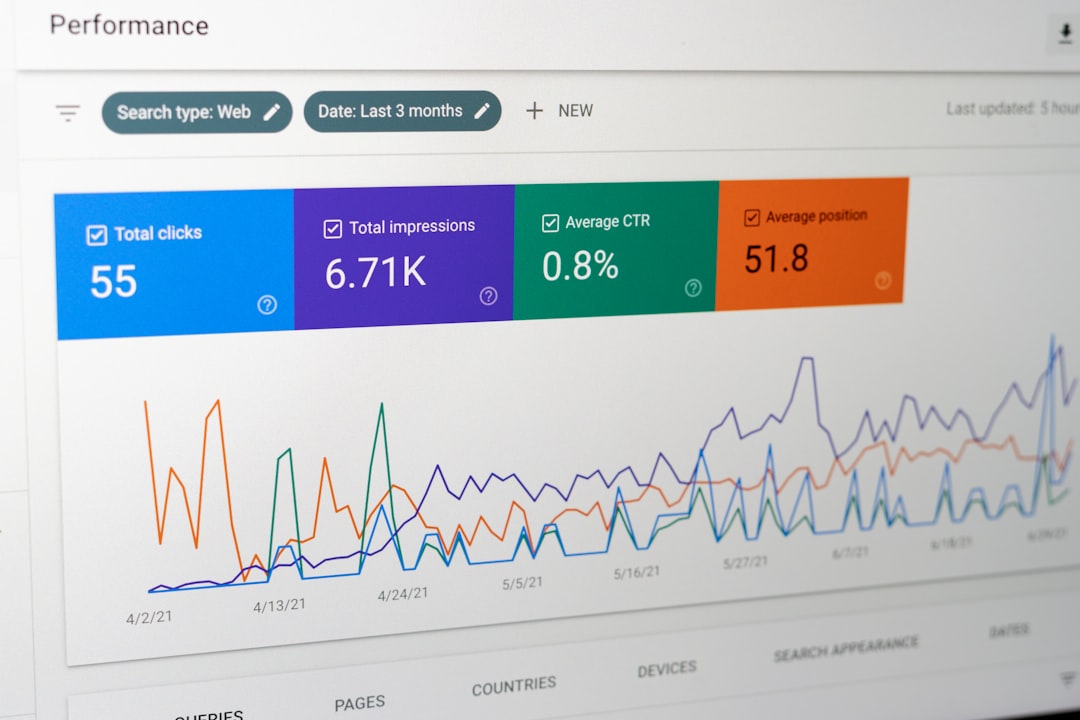

- Monitoring: How do you detect model drift or performance degradation?

- Security & Compliance: Are you meeting regulatory and data protection standards?

Without proper infrastructure, even the most accurate model can fail in production. AI model deployment platforms solve these issues by offering structured environments to operationalize machine learning workflows.

What AI Model Deployment Platforms Actually Do

At their core, model deployment platforms provide tooling for MLOps, a set of practices combining machine learning, DevOps, and data engineering.

Most platforms offer:

- Automated Model Packaging – Containerization with Docker or similar technologies

- API Endpoint Creation – Turn a trained model into a REST or gRPC endpoint

- Autoscaling Infrastructure – Adjust compute resources based on traffic

- Continuous Integration and Deployment (CI/CD) – Automate updates safely

- Monitoring and Logging – Track performance, errors, and drift

- A/B Testing and Shadow Deployment – Test new model versions safely

These capabilities transform experimental models into reliable business assets.
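To make the "API endpoint creation" item concrete, here is a minimal sketch of the request-handling logic that sits behind a prediction endpoint. Everything in it is an assumption made for illustration: `DummyModel`, `handle_predict`, and the `{"features": [...]}` request shape are hypothetical and not taken from any specific platform.

```python
import json


class DummyModel:
    """Hypothetical stand-in for a trained model: predicts the mean of its inputs."""

    version = "v1"

    def predict(self, features):
        return sum(features) / len(features)


def handle_predict(model, raw_body: bytes):
    """Turn a JSON request body into a (status_code, response_bytes) pair."""
    try:
        payload = json.loads(raw_body)
        features = payload["features"]
        if not isinstance(features, list) or not features:
            raise ValueError("features must be a non-empty list")
        features = [float(x) for x in features]
    except (ValueError, KeyError, TypeError) as exc:
        # Malformed input is reported to the caller instead of crashing the server.
        return 400, json.dumps({"error": str(exc)}).encode()

    body = {"model_version": model.version, "prediction": model.predict(features)}
    return 200, json.dumps(body).encode()
```

A managed platform would wrap logic like this in a web server, a container image, and a load balancer; the sketch only shows the validation and response contract.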

Leading AI Model Deployment Platforms

Let’s explore some of the most widely used platforms that help organizations deliver AI models reliably.

1. Amazon SageMaker

Amazon SageMaker is a fully managed service that supports the complete ML lifecycle. It simplifies building, training, and deploying models at scale.

Key strengths:

- One-click deployment to scalable endpoints

- Built-in monitoring with Model Monitor

- Strong integration with AWS ecosystem

- Support for multi-model endpoints to reduce cost

Best for organizations already deeply invested in AWS infrastructure.

2. Google Vertex AI

Vertex AI unifies Google Cloud’s AI tools into a cohesive ecosystem. It excels at managed pipelines, model registry features, and seamless scaling.

Highlights:

- Custom and AutoML model deployment

- Built-in feature store

- Robust monitoring and explainability tools

- Optimized for large-scale AI and LLM workloads

It’s particularly strong in handling large datasets and advanced deep learning workflows.

3. Microsoft Azure Machine Learning

Azure ML offers enterprise-grade tooling for deploying machine learning models with governance and compliance built in.

Advantages:

- Strong security and regulatory compliance features

- Integration with Azure DevOps

- Managed Kubernetes for scalable inference

- Excellent hybrid cloud capabilities

It’s a favorite among large enterprises operating in highly regulated industries.

4. Databricks (with MLflow)

Databricks combines unified data analytics with ML deployment tools. It heavily leverages MLflow for experiment tracking and model lifecycle management.

Why teams choose Databricks:

- Unified analytics and AI platform

- Built-in experiment tracking

- Seamless connection between data engineering and ML

- Model registry for version management

It’s ideal for data-driven organizations seeking tight integration between data pipelines and model deployment.

5. Kubeflow

Kubeflow is an open-source ML toolkit built on Kubernetes. It’s highly customizable and favored by teams that want flexibility and control.

Key features:

- Portable across cloud providers

- End-to-end ML pipelines

- Advanced orchestration capabilities

- Ideal for Kubernetes-native deployments

However, it requires more engineering effort compared to fully managed solutions.

Comparison Chart of Popular Deployment Platforms

| Platform | Best For | Managed Service | Kubernetes Support | Built-in Monitoring | Ease of Use |
|---|---|---|---|---|---|
| Amazon SageMaker | AWS-centric teams | Yes | Yes | Strong | High |
| Google Vertex AI | Large-scale AI workloads | Yes | Yes | Strong | High |
| Azure ML | Enterprise compliance | Yes | Yes | Strong | Medium-High |
| Databricks | Data-driven organizations | Partially | Yes | Moderate | Medium |
| Kubeflow | Custom Kubernetes setup | No | Native | Customizable | Low-Medium |

Critical Features That Ensure Reliability

Reliable model delivery isn’t just about uptime. It’s about maintaining consistent performance over time.

1. Model Versioning

Every deployed model should be versioned. Platforms with model registries make it easy to roll back if issues arise.
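The idea of a registry with rollback can be sketched in a few lines. This is a toy in-memory version under stated assumptions: the `ModelRegistry` class and its method names are hypothetical, and real registries persist artifacts and metadata rather than holding them in a dictionary.

```python
class ModelRegistry:
    """Toy in-memory registry: numbered versions plus a production history."""

    def __init__(self):
        self._versions = {}  # version number -> model artifact
        self._history = []   # versions promoted to production, oldest first

    def register(self, artifact):
        """Store an artifact and return its new version number."""
        version = len(self._versions) + 1
        self._versions[version] = artifact
        return version

    def promote(self, version):
        """Mark a registered version as the one serving production traffic."""
        if version not in self._versions:
            raise KeyError(f"unknown version {version}")
        self._history.append(version)

    def rollback(self):
        """Return production to the previously promoted version."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self._history.pop()
        return self._history[-1]

    @property
    def production(self):
        return self._history[-1] if self._history else None
```

Keeping the promotion history separate from the stored versions is what makes rollback cheap: nothing is retrained or re-uploaded, the pointer simply moves back.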

2. Automated Scaling

Traffic spikes can crash static systems. Autoscaling ensures your inference endpoints grow and shrink with demand.
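One common way to decide how far to scale is a proportional rule, the same shape as the formula documented for the Kubernetes Horizontal Pod Autoscaler: scale the replica count by the ratio of observed load to target load. The sketch below assumes a single load metric; the function name, the default bounds, and the example numbers are illustrative only.

```python
import math


def desired_replicas(current_replicas, current_load, target_load,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling: replicas grow with the observed/target load ratio.

    current_load and target_load are per-replica values of the same metric,
    for example requests per second or CPU utilization.
    """
    if current_replicas < 1 or target_load <= 0:
        raise ValueError("need at least one replica and a positive target load")
    raw = math.ceil(current_replicas * current_load / target_load)
    # Clamp so a noisy metric cannot scale to zero or grow without bound.
    return max(min_replicas, min(max_replicas, raw))
```

For example, four replicas each seeing 150 requests per second against a target of 100 would be scaled to six. Production autoscalers typically add stabilization windows and cooldowns on top of this rule to avoid flapping.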

3. Monitoring and Drift Detection

Data changes. User behavior shifts. Monitoring systems detect when model accuracy degrades due to concept drift or data drift.
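As one illustration of data drift detection, the sketch below computes the Population Stability Index (PSI) between a reference sample of a feature (for example, training data) and a live sample. PSI is only one of several possible drift measures, and the bin count and the `eps` floor here are assumptions chosen for the example.

```python
import math


def psi(reference, live, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range fall into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    live_p = proportions(live)
    return sum((a - e) * math.log(a / e) for e, a in zip(ref_p, live_p))
```

A commonly quoted rule of thumb treats PSI below about 0.1 as stable and above about 0.25 as a significant shift, but appropriate thresholds depend on the feature and the application.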

4. Canary and Shadow Deployments

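A canary deployment routes a small percentage of live traffic to the new model, while a shadow deployment sends the new model a copy of production traffic without returning its predictions to users. Both approaches minimize risk before a full rollout.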
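Sending only a small share of traffic to a new model is often implemented with deterministic hashing, so the same request or user ID always lands on the same variant. The sketch below is one such scheme; the `route` function, the variant labels, and the choice of SHA-256 are assumptions for illustration, not a specific platform's mechanism.

```python
import hashlib


def route(request_id: str, canary_percent: float) -> str:
    """Deterministically assign a request to the 'canary' or 'stable' model."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the hash to a bucket in 0..9999, giving 0.01% granularity.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return "canary" if bucket < canary_percent * 100 else "stable"
```

Because the assignment depends only on the ID, raising `canary_percent` from 5 to 20 keeps the original 5% on the canary and adds new IDs to it, which makes gradual rollouts easier to reason about.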

5. Observability and Logging

Comprehensive logs help troubleshoot issues and audit predictions—especially crucial in finance, healthcare, and legal applications.
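A simple way to make predictions auditable is to emit one structured record per inference. The sketch below builds such a record as a JSON line; the field names and the `prediction_record` helper are hypothetical, and a real system would also need to consider redacting sensitive features before logging them.

```python
import json
import time
import uuid


def prediction_record(model_version, features, prediction, latency_ms):
    """Build one JSON log line describing a single prediction."""
    return json.dumps(
        {
            "request_id": str(uuid.uuid4()),  # unique ID for tracing this call
            "timestamp": time.time(),         # seconds since the Unix epoch
            "model_version": model_version,
            "features": features,
            "prediction": prediction,
            "latency_ms": latency_ms,
        },
        sort_keys=True,
    )
```

Recording the model version alongside each prediction is the detail that matters most for audits: it lets a reviewer tie any individual output back to the exact model that produced it.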

Cloud vs. On-Premises Deployment

Organizations must decide between cloud-based platforms and on-premises solutions.

Cloud Deployment Benefits:

- Rapid scalability

- Minimal infrastructure management

- Global availability

On-Prem Deployment Benefits:

- Greater data control

- Enhanced privacy compliance

- Custom hardware optimization

Hybrid approaches are increasingly common, especially for industries facing strict regulatory requirements.

Emerging Trends in Model Deployment

The field of AI deployment continues to evolve. Key trends include:

- Serverless Inference: Run models without managing servers explicitly.

- Edge Deployment: Deploy models directly on IoT devices and mobile hardware.

- LLM-Specific Infrastructure: Specialized hosting for large language models.

- Feature Stores: Centralized management of input data features.

- Responsible AI Tooling: Bias detection and explainability built into pipelines.

These innovations reflect a broader shift toward making AI systems more scalable, accountable, and embedded in everyday enterprise workflows.

How to Choose the Right Platform

Selecting the right deployment platform depends on your organization’s specific needs. Consider:

- Existing Cloud Provider – AWS, GCP, or Azure alignment simplifies integration.

- Team Expertise – Kubernetes experience may justify open-source tools.

- Compliance Requirements – Regulated industries should prioritize governance features.

- Budget Constraints – Managed services often carry higher direct costs but reduce engineering overhead.

- Model Complexity – Large-scale deep learning requires GPU-optimized infrastructure.

No single solution fits every organization. The goal isn’t just deployment—it’s sustainable, repeatable, and observable AI operations.

Final Thoughts

AI model deployment platforms are the unsung heroes of modern machine learning. While model architecture and accuracy often steal the spotlight, it’s deployment infrastructure that determines whether an AI solution thrives in production or fails under real-world conditions.

By providing scalable infrastructure, automation, monitoring, and governance tools, these platforms enable teams to deliver models reliably and confidently. As AI continues to integrate deeper into business operations, robust model deployment strategies will no longer be optional—they’ll be foundational.

Ultimately, the future of AI isn’t just about smarter models. It’s about delivering them consistently, safely, and at scale.